FunctionGemma Announcement: Ushering in a New Era for Function-Calling AI Models

Artificial intelligence is moving from conversation toward action. On December 18, 2025, Google announced FunctionGemma, a specialized model built to translate natural language into real-world actions reliably and privately, on the device itself. As the latest member of the Gemma model family, FunctionGemma targets edge AI and function-calling agents, giving developers and organizations a base for building privacy-first applications across industries.

What Is FunctionGemma?

FunctionGemma is a fine-tuned variant of the Gemma 3 270M model, built for function calling: the ability to translate natural language requests into structured, executable API calls. Unlike chatbots limited to dialogue, FunctionGemma can act as an “intelligent traffic controller,” handling common commands on the device and routing more complex tasks to larger cloud-based models when needed.

This architecture positions FunctionGemma as a pivotal intermediary between users and software systems—capable of running independently for privacy-sensitive, offline use cases, or as part of a broader orchestrated AI ecosystem.
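The “traffic controller” pattern can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not FunctionGemma's actual interface: the JSON call shape, the tool names `set_volume` and `toggle_flashlight`, and the `escalate_to_cloud` helper are all hypothetical, and the model's real output format may differ and need its own parser.

```python
import json
from typing import Callable, Dict


# Hypothetical on-device handlers (illustrative names, not part of FunctionGemma).
def set_volume(level: int) -> str:
    return f"volume set to {level}"


def toggle_flashlight(on: bool) -> str:
    return "flashlight on" if on else "flashlight off"


LOCAL_TOOLS: Dict[str, Callable[..., str]] = {
    "set_volume": set_volume,
    "toggle_flashlight": toggle_flashlight,
}


def escalate_to_cloud(request: str) -> str:
    # Placeholder for forwarding the request to a larger hosted model.
    return f"escalated: {request}"


def route(request: str, model_output: str) -> str:
    """Run a structured call locally when possible, otherwise escalate.

    Assumes the small model's output has already been converted to a JSON
    object of the form {"name": ..., "arguments": {...}}.
    """
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError:
        return escalate_to_cloud(request)
    if not isinstance(call, dict):
        return escalate_to_cloud(request)

    name = call.get("name")
    args = call.get("arguments", {})
    if not isinstance(name, str) or not isinstance(args, dict):
        return escalate_to_cloud(request)

    handler = LOCAL_TOOLS.get(name)
    if handler is None:
        return escalate_to_cloud(request)
    try:
        return handler(**args)
    except TypeError:
        # Missing or unexpected arguments: let the larger model handle it.
        return escalate_to_cloud(request)
```

In this sketch, anything the local registry cannot handle (unparseable output, an unknown tool, mismatched arguments) falls through to the larger model, so the small model only has to be right about the commands it is meant to own.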

Technical Features and Innovations

- Unified Action and Chat: FunctionGemma seamlessly blends conversational fluency with the ability to generate structured, deterministic API calls, making it adept at both understanding users and executing tasks.

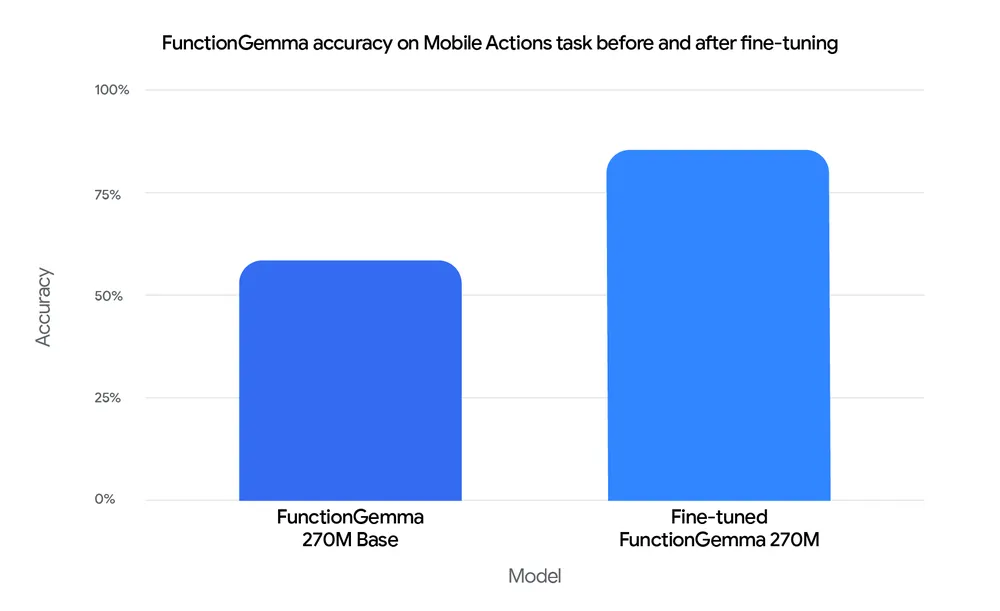

- Customizability: The model is designed for efficient fine-tuning. On Google's Mobile Actions benchmark, accuracy rose from a 58% baseline to 85% after targeted fine-tuning, a gain that makes the model far more dependable for edge agents.

- Edge Optimization: At just 270 million parameters, FunctionGemma is compact enough to run on mobile devices and platforms like NVIDIA Jetson Nano, ensuring low-latency, on-device intelligence.

- Wide Ecosystem Support: Developers can fine-tune and deploy FunctionGemma using Hugging Face, Keras, NVIDIA NeMo, Vertex AI, and more, fostering broad adoption and easy integration.

- Efficient Tokenization: A 256k-token vocabulary tokenizes JSON and multilingual input efficiently, which keeps sequences short and reduces on-device latency.

- Offline Operation & Data Privacy: The model runs without network connectivity, enabling robust privacy for sensitive workflows and compliance-focused applications.
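The accuracy figures quoted for fine-tuning describe how often the model emits the right call. The scoring method is not described here, so the following is only one plausible way to measure it: an exact-match check on the function name and arguments. The `Call` shape and both function names are assumptions made for illustration.

```python
from typing import Any, Dict, List

# Assumed shape of a structured call: {"name": str, "arguments": dict}.
Call = Dict[str, Any]


def calls_match(predicted: Call, expected: Call) -> bool:
    """True when the function name and every argument agree exactly."""
    return (
        predicted.get("name") == expected.get("name")
        and predicted.get("arguments", {}) == expected.get("arguments", {})
    )


def exact_match_accuracy(predictions: List[Call], references: List[Call]) -> float:
    """Fraction of predictions that exactly match their reference call."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        return 0.0
    hits = sum(calls_match(p, r) for p, r in zip(predictions, references))
    return hits / len(references)
```

Running the same evaluation set through the base model and the fine-tuned model, and comparing the two scores, gives a before-and-after figure of the kind quoted above. Exact match is strict: a call with the right function but a slightly different argument value counts as a miss.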

FunctionGemma in Practice: Key Use Cases

- Natural Language API Control: Enables intuitive voice or text commands for smart home devices, media apps, and navigation systems.

- Local-First Applications: Delivers instant, private responses for offline or bandwidth-constrained environments.

- Compound Systems: Functions as a lightweight edge model for routine tasks, while coordinating with more powerful models for advanced workflows.

- Interactive Games and Simulations: Powers features like the TinyGarden mini-game and local physics puzzle solving, demonstrating complex logic on mobile devices.

- Mobile Assistants: Facilitates offline, OS-level control for tasks like managing contacts or calendar events—without sending data to the cloud.

Demonstrations and Availability

Google showcases FunctionGemma’s capabilities in the AI Edge Gallery app, where users can experience an interactive mini-game and a developer challenge. The Mobile Actions demo highlights offline assistant functionality, parsing commands like “Add John to my contacts” or “Turn on the flashlight” and executing them locally.
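To make the Mobile Actions flow concrete, here is a small sketch of what executing a parsed command locally might involve. It assumes the model has already turned “Add John to my contacts” into a structured call; the tool names `create_contact` and `turn_on_flashlight`, the argument schema, and the in-memory `Device` class are all hypothetical stand-ins for real OS APIs.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List

# Hypothetical tool declarations: tool name -> required argument names and types.
TOOLS: Dict[str, Dict[str, type]] = {
    "create_contact": {"first_name": str},
    "turn_on_flashlight": {},
}


@dataclass
class Device:
    """In-memory stand-in for on-device state; nothing leaves the process."""
    contacts: List[str] = field(default_factory=list)
    flashlight_on: bool = False


def validate(call: Dict[str, Any]) -> Dict[str, Any]:
    """Check the call against its declaration and return its arguments."""
    name = call.get("name")
    if not isinstance(name, str) or name not in TOOLS:
        raise ValueError(f"unknown tool: {name!r}")
    args = call.get("arguments", {})
    schema = TOOLS[name]
    if not isinstance(args, dict) or set(args) != set(schema):
        raise ValueError(f"{name} expects arguments {sorted(schema)}")
    for key, expected_type in schema.items():
        if not isinstance(args[key], expected_type):
            raise ValueError(f"{key} must be of type {expected_type.__name__}")
    return args


def execute(device: Device, call: Dict[str, Any]) -> str:
    """Validate a structured call, then apply it to local device state."""
    args = validate(call)
    if call["name"] == "create_contact":
        device.contacts.append(args["first_name"])
        return f"Added {args['first_name']} to contacts"
    device.flashlight_on = True
    return "Flashlight on"
```

Validating before executing matters with a small model: a malformed call is rejected with an error instead of changing device state.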

Developers can access an open-source fine-tuning cookbook to adapt FunctionGemma for bespoke applications and deploy it on a variety of hardware. The model is available on platforms including Hugging Face and Keras, with comprehensive documentation and community support.

Technological and Business Impact

The release of FunctionGemma reflects a broader industry shift from “just chat” interfaces toward actionable AI agents capable of automating multi-step workflows with speed, privacy, and reliability. Its design supports enterprise-grade deployments, offering:

- Improved determinism and consistency via fine-tuning

- Offline operation for regulated sectors and privacy-sensitive use cases

- Scalable integration with both local and cloud AI infrastructure

These capabilities position FunctionGemma as a catalyst for innovation, empowering businesses to adopt AI-driven automation confidently and securely.

Summary and Future Outlook

FunctionGemma represents a significant milestone in the evolution of edge AI agents, enabling developers and enterprises to build smarter, more private, and reliable applications. As the Gemma model family continues to evolve, the integration of function-calling technology is expected to drive further innovation across the AI ecosystem.

Developers can experiment with FunctionGemma today through Google’s open-source resources and supported frameworks. With robust community engagement and an expanding suite of tools, FunctionGemma is poised to accelerate the adoption of actionable, privacy-first AI across industries.

Sources and Further Information

- Google Developer Blog: FunctionGemma announcement (December 18, 2025)

- Google AI Edge Gallery app and demos

- Related Google Gemini models: Gemini blog

- Community and technical forums, GitHub projects, fine-tuning tools: Hugging Face, Keras, NVIDIA NeMo
